Being a data analyst in HR in 2010 was a Sisyphean task in an organization using 20th-century technology. Getting HR data was hard enough. Freeing up IT resources to create data views and OLAP cubes was a weeks-long wait. Finance squinted with suspicion at any data request.

What made it a cheerful task was the mission: make sense of HR reporting with the data and tools you have. The resources were an Oracle HRIS, stand-alone talent management applications, an understaffed IT group, Microsoft Office, and SharePoint.

Just as in organizations today, scattered and inaccurate data were significant obstacles. Working through the dirty data was a forensic challenge, but along the way, new realizations dawned.

Data doesn’t have to be perfect to be useful.

Suppose you needed information on average time in pay grade but discovered that 13% of promotion dates could be in error by as much as a week. If you are reporting average time in grade by number of years, does a week matter if you are 95% confident that 87% of records are accurate?
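A quick back-of-the-envelope check makes the point concrete. The sketch below is only an illustration, and it assumes the worst case: every one of the suspect 13% of records is off by the full seven days, all in the same direction. The function name and the days-per-year constant are our own choices for the example.

```python
DAYS_PER_YEAR = 365.25  # assumption: average year length, leap years included


def worst_case_mean_shift_days(error_share: float, max_error_days: float) -> float:
    """Largest possible shift in the average if every suspect record
    is wrong by the maximum amount in the same direction."""
    return error_share * max_error_days


# Figures from the example: 13% of promotion dates, off by up to a week.
shift_days = worst_case_mean_shift_days(error_share=0.13, max_error_days=7)
shift_years = shift_days / DAYS_PER_YEAR

print(f"Worst-case shift: {shift_days:.2f} days ({shift_years:.4f} years)")
```

Even under that pessimistic assumption, the average moves by less than one day, which disappears when time in grade is reported in years.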

Most decisions do not require pinpoint accuracy.

In one example, labor negotiators needed the annual cost of each pay type for each group of covered employees. But when employees transferred from one group to another, payroll items were sometimes charged to the unit for a part of a pay period. When we showed the negotiators how infrequent those occurrences were, they decided it didn’t affect their work.

As we explained in this article, it is possible to make certain assumptions about a large population or one of unknown size with a sample of only five. There is a 93.75% probability that the median of the population is between the highest and lowest values in the sample.[1]
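The 93.75% figure can be reproduced with a few lines of arithmetic. This sketch assumes the five values are drawn at random and independently, so each one has an even chance of falling above or below the population median. The median lands outside the sample's range only when all five values fall on the same side of it. The function name is ours, chosen for illustration.

```python
from fractions import Fraction


def prob_median_within_sample(n: int) -> Fraction:
    """Probability that the population median lies between the smallest
    and largest of n random, independent sample values."""
    # Chance that all n values fall above the median, or all fall below it.
    all_on_one_side = 2 * Fraction(1, 2) ** n
    return 1 - all_on_one_side


p = prob_median_within_sample(5)
print(p, float(p))  # 15/16 0.9375
```

The same function shows how quickly the confidence grows with sample size, which is why such a small sample can already support a rough estimate.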

Use only the data you need.

During the early hype of big data analytics, some companies dumped massive amounts of data into data warehouses and data lakes, then tried to make sense of it. Some data scientists proposed that the right way to do analytics was to collect as much data as possible, analyze it, and “let the data tell the story.” Years later, business leaders complained they weren’t getting much insight into their business.

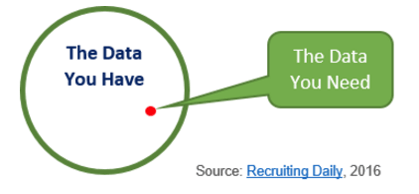

For almost all business decisions, the data you have is many times greater than what you need.

To determine what data you need, define the business problem first. Ask what decision you will make with the information. Data that does not support a business decision or a regulatory requirement has no value. Usually, only a few things matter, but they usually matter a lot.[2]

Stop errors at the source.

Our first principle of data quality is to stop creating bad data. For example, an Accounts Payable office mailed final paychecks to former employees, but many checks sent to addresses with apartment or lot numbers were not delivered. The cost and aggravation of handling angry phone calls, correcting addresses, and reissuing checks were a burden.

We cleaned up the addresses with a simple audit report to administrators, but the problem persisted. When we investigated, we found that the AP check writer system was truncating address lines longer than 30 characters, but self-service address change allowed 60 characters. The problem was easily solved without replacing hardware.
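One way to stop this kind of error at the source is to check address lines at entry against the limit of the downstream system. The sketch below is a hypothetical illustration, not the fix that was actually applied: the function name, the constant, and the sample address are all invented for the example, and only the 30-character limit comes from the case described above.

```python
# Limit of the downstream check writer in the example above.
MAX_ADDRESS_LINE_LENGTH = 30


def overlong_address_lines(
    lines: list[str], limit: int = MAX_ADDRESS_LINE_LENGTH
) -> list[str]:
    """Return the address lines a downstream system would truncate."""
    return [line for line in lines if len(line.strip()) > limit]


# Hypothetical address entered through self-service.
address = [
    "1234 North Meadowbrook Boulevard, Apt 12B",
    "Springfield, IL 62704",
]

rejected = overlong_address_lines(address)
if rejected:
    print("Please shorten these address lines:", rejected)
```

A check like this asks the employee to fix the entry while they are still on the form, so the apartment or lot number is never silently cut off later.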

Get Started with the Data You Have

Dirty data is often cited as a barrier to data analytics. Our experience tells us that most decisions are based on estimates, and data does not have to be 100% accurate to inform you that one decision has a probability of being more successful than another.

References:

1. Hubbard, Douglas W. How to Measure Anything: Finding the Value of “Intangibles” in Business, 3rd ed. Hoboken, New Jersey: John Wiley & Sons, Inc., 2014.

2. Hubbard, p. 44.

Pixentia is a full-service technology company dedicated to helping clients solve business problems, improve the capability of their people, and achieve better results.